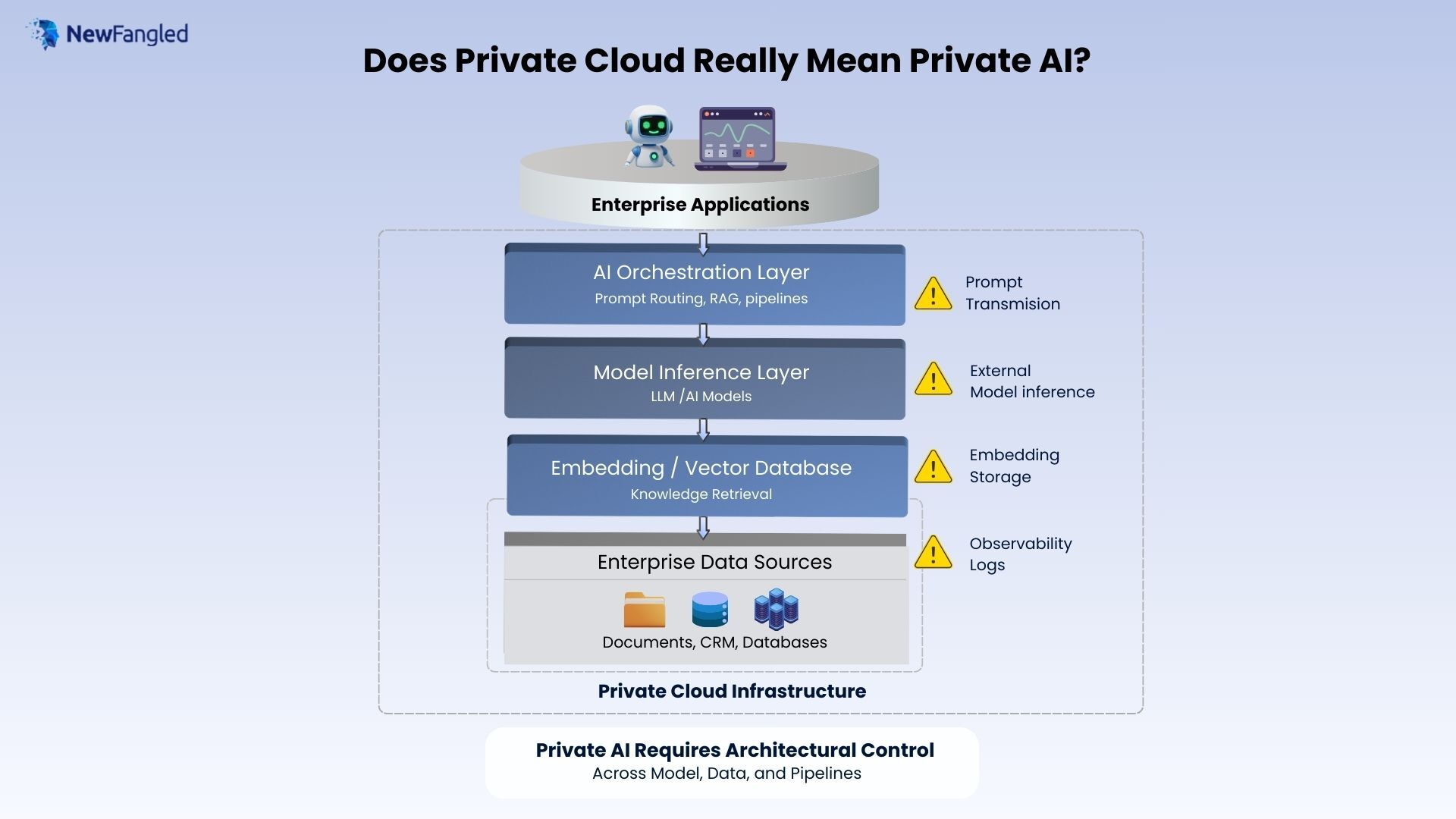

Does Private Cloud Really Mean Private AI?

Introduction: The Misconception Enterprises Are Facing

A large enterprise recently deployed an internal AI assistant designed to help employees search company knowledge and automate workflows. The application was hosted inside the organization’s private cloud environment, protected by corporate identity systems and enterprise security policies.

At first glance, the deployment appeared secure.

However, engineers later discovered that the AI assistant was sending prompts containing sensitive internal data to an external model API. Although the application infrastructure was private, the AI processing pipeline extended beyond the organization’s boundaries. This scenario illustrates a growing misconception in enterprise AI adoption: private cloud does not automatically mean private AI.

From the perspective of NewFangled Private Enterprise GenAI Architecture, privacy in AI systems is determined not only by infrastructure location but also by how models, data pipelines, and inference processes are designed and governed. As enterprises increasingly adopt generative AI technologies, understanding this architectural distinction becomes critical for maintaining data security, regulatory compliance, and operational trust.

Why Enterprises Confuse Private Cloud with Private AI

For decades, enterprise IT strategies have relied on infrastructure control to protect sensitive workloads. Moving systems into private cloud environments allowed organizations to enforce strict network policies, access controls, and data protection standards. This approach worked effectively for traditional applications. However, generative AI systems introduce additional layers of complexity. AI applications rarely operate as standalone software services. Instead, they function as distributed intelligence systems composed of multiple components working together.

These components may include:

- orchestration frameworks

- external model APIs

- vector databases

- retrieval pipelines

- telemetry systems

- training environments

Even when the core application runs inside private infrastructure, other elements of the AI system may still operate externally. The NewFangled Private Enterprise GenAI Architecture approach highlights that privacy in AI systems must be evaluated across the entire architectural stack rather than solely at the infrastructure layer.

The Enterprise AI Stack: Beyond Infrastructure

To understand why private cloud does not automatically create private AI, it is useful to examine the layers that typically compose enterprise generative AI systems.

Application Layer

The application layer represents the interface through which users interact with AI capabilities. Examples include internal copilots, automated decision assistants, knowledge search tools, and AI-powered analytics dashboards. These applications often run within enterprise infrastructure environments such as private cloud platforms. However, the application layer itself does not perform the intelligence functions that power generative AI.

AI Orchestration Layer

The orchestration layer manages how AI workflows operate. It coordinates prompt generation, model selection, retrieval pipelines, and response assembly. This layer determines which models are used and where requests are sent. In many enterprise deployments, orchestration frameworks connect applications to external AI services. Within NewFangled Private Enterprise GenAI Architecture, controlling the orchestration layer is essential because it governs how enterprise data flows through AI systems.
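As a minimal sketch of what controlling the orchestration layer can look like, the snippet below checks a model endpoint against an allowlist before any request is routed to it. The hostnames and function names are hypothetical and chosen only for illustration; a real deployment would load the allowlist from its own policy source.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of hostnames considered inside the enterprise boundary.
INTERNAL_HOSTS = {"inference.corp.internal", "models.corp.internal"}


def is_internal_endpoint(url: str) -> bool:
    """Return True only if the URL's hostname is on the internal allowlist."""
    host = urlparse(url).hostname
    return host is not None and host in INTERNAL_HOSTS


def select_endpoint(candidates: list[str]) -> str:
    """Pick the first candidate endpoint that stays inside the boundary."""
    for url in candidates:
        if is_internal_endpoint(url):
            return url
    # Fail closed: refuse to route rather than fall back to an external service.
    raise PermissionError("no internal model endpoint available")
```

Failing closed is the key design choice here: when no internal endpoint is available, the orchestrator raises an error instead of silently sending the prompt elsewhere.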

Model Inference Layer

The inference layer is responsible for executing AI models and generating outputs. Organizations may rely on external model providers, dedicated enterprise AI platforms, or internally hosted models. If prompts containing sensitive enterprise information are transmitted to external inference services, that data leaves the enterprise environment, regardless of where the application itself is hosted. The NewFangled Private Enterprise GenAI Architecture perspective emphasizes that model control is one of the most important determinants of private AI.

Embedding and Vector Layer

Many generative AI applications use embeddings to represent enterprise data in vector format. These embeddings are stored in vector databases and enable semantic search and retrieval. While embeddings appear abstract, they often encode information derived from sensitive documents and internal datasets. Without proper governance, vector storage can become another source of potential data exposure.
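One way to govern vector storage is to carry the source document's classification alongside each embedding and filter on it at retrieval time. The sketch below assumes a three-level labeling scheme ("public", "internal", "restricted"); the labels, the `VectorRecord` type, and the function name are illustrative assumptions, not part of any specific vector database.

```python
from dataclasses import dataclass

# Hypothetical classification levels, ordered from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "restricted": 2}


@dataclass(frozen=True)
class VectorRecord:
    doc_id: str
    embedding: tuple[float, ...]
    classification: str  # inherited from the source document


def visible_records(records: list[VectorRecord], clearance: str) -> list[VectorRecord]:
    """Return only the records whose classification the caller is cleared to see."""
    limit = LEVELS[clearance]
    return [r for r in records if LEVELS[r.classification] <= limit]
```

Applying the filter before similarity search, rather than after, keeps restricted embeddings out of the candidate set entirely.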

Enterprise Data Layer

Enterprise AI systems rely heavily on internal data sources.

These sources may include:

- knowledge bases

- CRM systems

- operational databases

- analytics repositories

- document archives

Protecting enterprise data requires architectural safeguards that control how AI pipelines access and process information. The NewFangled Private Enterprise GenAI Architecture framework treats data governance as a foundational element of AI system design.

Infrastructure Layer

Finally, infrastructure provides compute resources, storage systems, and networking capabilities. Private cloud environments can deliver significant advantages in terms of security, scalability, and operational control. However, infrastructure alone cannot enforce privacy across AI workflows.

The NewFangled Private Enterprise GenAI Architecture approach therefore focuses on architectural control across all layers of the AI stack.

The NewFangled Private Enterprise GenAI Architecture Framework

To address these architectural challenges, enterprises can evaluate their AI systems using four core control dimensions.

Model Control

Organizations must understand where models are hosted and who manages the inference environment. Model control determines whether prompts and outputs remain within enterprise boundaries.

Data Control

Data control ensures that enterprise information remains protected throughout AI workflows. This includes governance over documents, embeddings, prompts, and training datasets. The NewFangled Private Enterprise GenAI Architecture model emphasizes that enterprise data should always remain within secure, auditable environments.

Pipeline Control

AI pipelines determine how data flows through systems. Pipeline control involves managing prompt routing, retrieval mechanisms, and model access policies. By controlling these pipelines, organizations can enforce policies that protect sensitive information.
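A prompt-routing policy of this kind can be sketched in a few lines. The example below keeps any prompt that matches a sensitivity pattern on internal models and permits external routing only when policy explicitly allows it. The patterns, the `Route` type, and the `external_allowed` flag are assumptions made for illustration; an organization would substitute its own data-classification rules.

```python
import re
from dataclasses import dataclass

# Hypothetical sensitivity patterns; real rules would come from data-classification policy.
SENSITIVE_PATTERNS = [
    re.compile(r"\bconfidential\b", re.IGNORECASE),
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # an ID-like number format
]


@dataclass(frozen=True)
class Route:
    target: str  # "internal" or "external"
    reason: str


def route_prompt(prompt: str, external_allowed: bool = False) -> Route:
    """Decide where a prompt may be sent, defaulting to internal inference."""
    for pattern in SENSITIVE_PATTERNS:
        if pattern.search(prompt):
            return Route("internal", f"matched sensitive pattern {pattern.pattern}")
    if external_allowed:
        return Route("external", "no sensitive pattern matched")
    return Route("internal", "external routing disabled by policy")
```

Pattern matching alone will miss sensitive content that follows no fixed format, so this check works best as one layer of a broader policy rather than the only safeguard.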

Governance Control

Governance mechanisms enable organizations to monitor and audit AI systems. This includes implementing compliance policies, monitoring data access, and maintaining audit trails. Together, these four pillars form the foundation of NewFangled Private Enterprise GenAI Architecture.
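An audit trail does not have to store raw prompts to be useful. The following sketch records who sent a request, where it went, and a SHA-256 digest of the prompt instead of its text. The field names and the `audit_entry` function are hypothetical, and a digest offers limited protection for short or easily guessed prompts.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_entry(user: str, endpoint: str, prompt: str) -> str:
    """Build one JSON audit line; the prompt is stored as a digest, not as raw text."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "endpoint": endpoint,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
    }
    return json.dumps(entry, sort_keys=True)
```

Each returned line can be appended to a write-once log so that auditors can later confirm which endpoints received requests without the log itself becoming a store of sensitive text.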

Common Enterprise Mistakes When Deploying AI

Organizations frequently encounter several pitfalls when implementing AI systems.

Assuming Infrastructure Equals Privacy: Running AI applications in private cloud environments does not prevent prompts from being processed externally.

Sending Sensitive Prompts to External APIs: Early prototypes often rely on external AI services without sufficient governance.

Storing Embeddings Without Security Controls: Vector databases may inadvertently expose sensitive semantic representations of enterprise data.

Logging Sensitive Data in Observability Systems: Monitoring platforms may capture confidential information unless proper redaction policies are enforced.
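The last pitfall can be illustrated with Python's standard `logging` module, which lets a filter rewrite a record before any handler sees it. This is a minimal sketch: the two regular expressions (email addresses and long digit runs) and the `RedactingFilter` name are illustrative assumptions, not a complete redaction policy.

```python
import logging
import re

# Hypothetical patterns; a real policy would cover the organization's own identifiers.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
LONG_NUMBER = re.compile(r"\b\d{12,16}\b")


def redact(text: str) -> str:
    """Replace email addresses and long digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return LONG_NUMBER.sub("[NUMBER]", text)


class RedactingFilter(logging.Filter):
    """Redact the fully formatted message before handlers receive the record."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.msg = redact(record.getMessage())
        record.args = None  # arguments are already merged into the message
        return True
```

Attaching the filter with `logger.addFilter(RedactingFilter())` applies redaction to every record that logger emits, including values passed as format arguments.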

The NewFangled Private Enterprise GenAI Architecture framework helps enterprises identify and mitigate these risks.

How Enterprises Should Evaluate Private AI Platforms

Enterprise leaders should ask several key questions when evaluating AI systems:

- Where are prompts processed?

- Who controls model inference environments?

- Where are embeddings stored?

- Are prompts logged externally?

- Can the entire AI pipeline be audited?

These questions reveal whether an organization’s deployment aligns with NewFangled Private Enterprise GenAI Architecture principles.
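The five questions can be captured as a simple checklist so that answers are recorded consistently across deployments. The sketch below is one possible encoding; the `DeploymentAnswers` fields and the wording of each gap are assumptions introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class DeploymentAnswers:
    prompts_processed_internally: bool
    inference_enterprise_controlled: bool
    embeddings_stored_internally: bool
    prompts_logged_externally: bool
    pipeline_auditable: bool


def privacy_gaps(a: DeploymentAnswers) -> list[str]:
    """List every question whose answer indicates data may leave enterprise control."""
    gaps = []
    if not a.prompts_processed_internally:
        gaps.append("prompts are processed outside the enterprise boundary")
    if not a.inference_enterprise_controlled:
        gaps.append("model inference is not under enterprise control")
    if not a.embeddings_stored_internally:
        gaps.append("embeddings are stored outside the enterprise boundary")
    if a.prompts_logged_externally:
        gaps.append("prompts are logged by an external system")
    if not a.pipeline_auditable:
        gaps.append("the AI pipeline cannot be fully audited")
    return gaps
```

Applied to the scenario from the introduction, a deployment that is private in every respect except where prompts are processed would return a single gap.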

The Future of Enterprise AI Architecture

As generative AI adoption expands, enterprises will increasingly demand stronger governance and architectural transparency.

Future AI systems will likely emphasize:

- sovereign AI infrastructure

- enterprise-controlled model environments

- secure vector data platforms

- comprehensive governance frameworks

The principles underlying NewFangled Private Enterprise GenAI Architecture will therefore play an increasingly important role in enterprise technology strategy.

Conclusion

Private cloud infrastructure remains a valuable component of enterprise IT environments. It provides secure computing resources and operational control. However, private infrastructure alone does not guarantee private AI.

Generative AI introduces complex data pipelines, model interactions, and distributed processing layers that extend beyond traditional infrastructure boundaries. From the perspective of NewFangled Private Enterprise GenAI Architecture, privacy must be designed into the entire AI lifecycle. Organizations that understand this architectural reality will be better equipped to deploy AI systems that are secure, compliant, and trustworthy.

I work at NewFangled Vision, a 6-year-old private GenAI startup from India. We build enterprise-grade AI systems without large LLMs or heavy GPU dependence, with a mission to make AI a seamless, must-have capability for every organization—without complexity or hassle.